Every war produces lies. The Iran conflict has shown how much easier artificial intelligence has made the business of manufacturing them. Social media has been inundated with fabricated images, synthetic video, recycled clips, and staged “evidence” presented as breaking news from the battlefield. Some items were quickly exposed. Many were believed first and corrected later. By that stage, the damage had already been done. Reuters has had to debunk AI-generated images of a Saudi hotel supposedly set ablaze after an Iranian strike, a fake image and video of Ayatollah Ali Khamenei under rubble, and a fabricated missile barrage over Tel Aviv.

Fake content spreading quickly is one problem. Another is that the public is losing confidence in the very idea of visual proof. A dramatic image arrives and its credibility is immediately questioned. It could be real, recycled, doctored, or totally synthetic. In wartime, that uncertainty is a weapon in itself. It confuses viewers, blunts scrutiny, and gives propagandists a broader field in which to operate.

AI Fakes: Close Enough to Reality to Travel Fast

The most effective fakes are not absurd. They are persuasive because they resemble the sort of thing people already expect to see. Reuters fact-checks found that the images depicting a Riyadh hotel in flames and Khamenei beneath rubble were both false. But they circulated widely and quickly because they matched the emotional climate of the conflict.

Fake videos are causing an even greater stir. A viral clip showing Tel Aviv being struck by an Iranian missile barrage was also deemed to be AI-generated, according to three independent experts. And even when videos are genuine, they may carry a misleading caption or be reposted from previous conflicts. Another Reuters fact-check found that videos from June 2025 were posted online in March 2026 and presented as new footage.

Ultimately, however, AI-generated war content or misleading re-posts do not need to survive forensic scrutiny forever. They simply need to dominate the first wave of attention, after which they’ve already had their intended emotional effect.

A Propaganda Machine Built for the Feed

Iran and its allies have been accused of exploiting this environment aggressively. On Sunday 15 March, Donald Trump said Iran is using AI to spread false evidence of wartime success, including fabricated depictions of attacks and inflated claims of public support. While online news outlets cannot verify some of the specific allegations, the wider concern about AI-assisted disinformation has now clearly entered the mainstream.

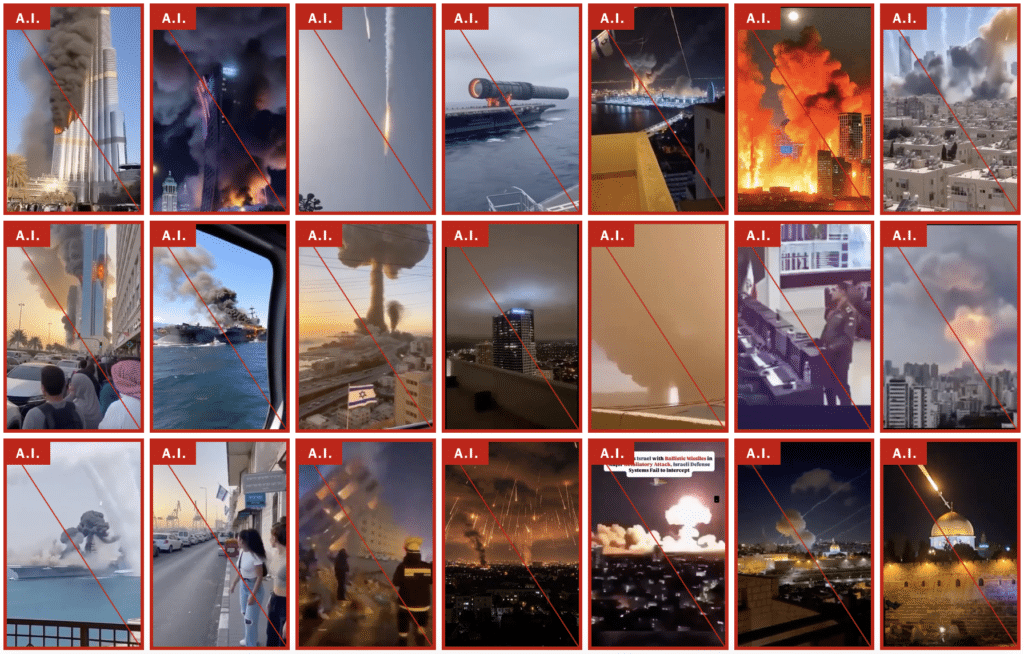

The New York Times published a gallery of more than 110 debunked AI videos and images from the past two weeks alone. They looked for “both obvious signs — such as depictions of buildings that do not exist, garbled text and behaviors or movements that defy expectations — and for invisible watermarks embedded within the files. The posts were also checked with multiple A.I. detector tools and compared with reports from news organizations.”

The situation is “very different” from when the Ukraine war broke out, says Marc Owen Jones, an associate professor of media analytics at Northwestern University in Qatar, who adds that we’re seeing “far more AI-related content now than we ever have before”.

“The use of A.I. images of places in the Gulf — being burnt or damaged — becomes more important in Iran’s playbook,” Mr. Jones said, “because it allows them to give a sense that this war is more destructive and maybe more costly for America’s allies than it might actually be.”

The AI fakes identified by the NY Times included:

- 37 fake images and videos falsely depicting active war

- 5 fake images and videos falsely depicting war preparation

- 8 fake images and videos falsely depicting destruction

- 5 fake images and videos falsely depicting crying soldiers

- 43 memes and overt uses of AI

- 13 other fake images and videos

How to Tell the Difference Between AI and Reality

According to the NY Times review:

Real footage of missile strikes was often shot from far away, typically at night, with missiles visible as little more than bright lights in the distance. Explosions in real videos are more often shown as plumes of smoke, not as fireballs, with bystanders rushing to film the scene only after the munitions meet their target.

Some A.I. videos and images, by contrast, have falsely depicted war like an over-the-top Hollywood action movie, with enormous explosions resulting in mushroom clouds, sonic booms that ripple across unnamed cities and supposed hypersonic missiles that leave glowing streaks in the sky. Real footage is sometimes enhanced by A.I. tools to make explosions appear larger and more devastating, further blurring the line between what is real and fake.

The A.I. footage has essentially created an alternate reality more suited to social media, experts said, where the exaggerated footage is more likely to find an audience.

In one of the most circulated fake videos found online, a shaky handheld scene seemingly shot from an apartment balcony in Tel Aviv shows the skyline pounded with missiles as an Israeli flag sits in the foreground. The video was viewed millions of times across platforms and was picked up by social media influencers and fringe news websites, according to a review of social media activity by The Times.

The Israeli flag in the foreground was one telltale sign that the video was A.I.-generated, experts said. To generate such videos, creators who use A.I. tools will typically write simple text instructions describing, for example, a shaky handheld video of a missile strike on Israel. The A.I. tools will then often include an Israeli flag or the Star of David to fulfill such a request. Several other A.I. videos included the flag.

Real Reporting Gets Dragged Down, Too

Once fake imagery becomes commonplace, genuine reporting pays the price as well. Authentic photographs and verified footage are now regularly dismissed as AI-generated by users who dislike what they imply or simply no longer trust what they see. That is a major strategic advantage for any regime or faction seeking to obscure responsibility.

This is one of the bleakest consequences of the new information environment. The lie does not merely compete with the truth. It degrades the status of truth altogether. A missile strike, a dead civilian, a destroyed building, or a rally crowd no longer enters public debate as a piece of evidence. It enters as a contested object in a polluted stream of competing claims. That favours states, movements, and activists who are perfectly content to turn reality into a blur.

The erosion of trust also weakens journalism itself. Verification takes time, access, and expertise. Generation takes seconds. The side that fabricates can move faster than the side trying to establish what actually happened. That asymmetry helps explain why the online conversation around war now feels less like reporting and more like a race between impression and correction.

Are Social Media Platforms Part of the Problem?

The surge in wartime AI fakery is not simply a failure of media literacy. It reflects the incentives built into the platforms themselves. Material that is dramatic, tribal, and emotionally charged is exactly the kind of content algorithms tend to reward. If a fabricated missile strike or fake battlefield image can generate outrage, fear, or triumph, it will travel quickly regardless of whether it is true.

Wired’s reporting on X makes that point clearly enough. Blue-check accounts pushed synthetic visuals tied to the conflict, while the platform’s own AI tools struggled to sort truth from fiction. The architecture of social media gives a structural advantage to content that feels urgent and shareable, and wartime deception is perfectly designed for that market.

That leaves ordinary users with an impossible burden. They are expected to exercise caution in an environment deliberately optimised to defeat caution. Governments speak vaguely about resilience and digital literacy, but the commercial model remains intact. Platforms profit from attention, and synthetic war content captures attention exceptionally well.

Suspicion Is Now the Default Setting

For decades, the photograph and the video clip carried an assumption of evidentiary value. That assumption is now badly damaged. During this conflict, widely believed false visuals have shaped perceptions of momentum, retaliation, vulnerability, and legitimacy in a live war.

That leaves the public in a degraded information order where suspicion has become the default. And there does not seem to be any realistic alternative at present. A dramatic wartime image on X, Instagram, TikTok, or Telegram now requires verification before belief. That is a miserable standard for democratic societies, but perhaps necessary in the age of AI.

Final Thought

The digital battlefield now runs alongside the physical one, and it is doing profound damage to public trust. AI-generated images, fake videos, and repurposed clips are not merely distorting individual events. They are degrading the entire information environment in which war is understood. As visual evidence becomes permanently suspect, the viewer is left to navigate conflict through rumour and instinct, and is ultimately swayed by whatever content reached them first.

How much of what appears in your feed during a war do you still believe without independent confirmation?


🙏🙏

What the Holy Bible says of this horrific decade just ahead of us.. Here’s a site expounding current global events in the light of bible prophecy.. To understand more, pls visit 👇 https://bibleprophecyinaction.blogspot.com/

Just a couple of points regarding that website link: In the book of Daniel wasn’t he a captive of the Babylonian empire therefore doesn’t predict the rise of the Babylonian empire ? Also, the capital of Elam you say, was/is Susa, so Iraq not Iran.

People are getting hurt but not by who they say. The Iranian regime punishes Iranians if they post true pictures that it’s the Iranian regime blowing things up. The same goes for the Israeli regime. They suppress the truth of who drops these bombs and what causes the damages and death. There are real casualties but not from who they say.

“gives propagandists a broader field in which to operate.”

Nope. The field steadily narrows as it becomes obvious that nothing in any media can be trusted.

Sorry oligarchy, but the era of big propaganda is coming to an end.

Hi,

Are you suggesting that a solution to propaganda is to make every single piece of information untrustworthy? If so, wouldn’t that be an end to truth as well?

Regards,

G Calder

Nope. And I think you know better than that.

To distrust the “legacy” media is a logical position to take. They may be reporting truthfully but, on account of their shocking track-record of dishonesty, it makes sense to assume they are lying and build your knowledge from there. That’s just my view.

Propaganda is nothing new. If only we had been allowed to see that the insignia of Ukraines Azov battalion and Germany’s 2nd SS Das Reich are the same, perhaps we wouldn’t have wasted so many resources on the country that manned the concentration camps, and was killing ethnic Russians in the dombass…Putting didn’t illegally invade, he defended his people. Now that the UK and EUSSR are not helping the USA, the Russians, quite rightly annoyed, may well give Ukraine a good stomping and then steamroller the EUSSR..NATO is finished thanks to the pro islam Starmer and co. And if the gimmegrants can make it across the channel in Rubber dingys then the Russians will have no problem…yob vamadi Is the phrase…

we know the truth , we just need motivation !

project ( bluebird ) , project ( artichoke ) , ( MKUltra ) camp ( century ) operation ( paper clip ) ( looking glass ) , project ( Montauk ) project ( ice worm ) operation ( golden Lilly ) operation ( lockstep ) event 201 , scopex , crispr , operation ( crimson mist ) Hegelian Dialectic , chem trails , the act 1982 , operation ( mockingbird) operation ( cumulus ) project human 2.0 , project ( eden ) , nesara , operation ( haarp ) , operation ( north woods ) Rosalind Peterson , Galen Winsor , project ( slam dunk ) Fletcher Proutly , ivermectin

look at the shape of the construction of the dome in the EDAN project , faraday !

eden

Truth of war was fake so obvious.

1st. Ukraine war with Zalensky could travel in and out of Ukraine anytime. The war zone was predetermined only certain areas (not all of Ukraine). Planned & play war (video) game rather real war.

2nd. Russia was cahoots with this fakery.

3rd. Mass media reporting was biased and exaggerating in every news report.

Never trust mass media reporting.