An 18-year-old transgender teenager in Tumbler Ridge, British Columbia, is alleged to have used the AI model ChatGPT in the run-up to a February 10 school shooting that killed eight people, including her mother, her 11-year-old brother, five students and an education assistant, before she took her own life. OpenAI had already flagged and banned one of Jesse Van Rootselaar’s accounts months earlier for “misuses of our models in furtherance of violent activities,” yet did not alert police. According to a civil claim filed in British Columbia, roughly a dozen employees identified the chats as signalling imminent risk, leadership refused to contact law enforcement, and the shooter later opened a second account and continued planning.

What Happened in Tumbler Ridge?

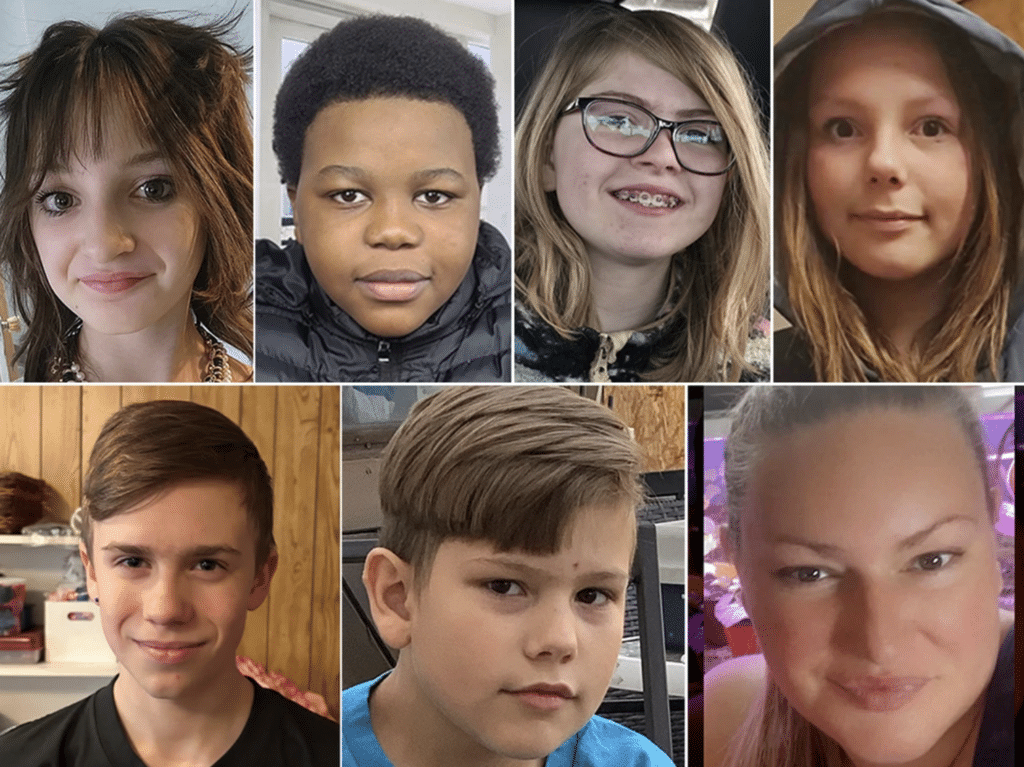

The massacre began at home. Police said Van Rootselaar killed her mother and sibling before going to a school in Tumbler Ridge, where an educator and five students were shot dead. Two others were hospitalised with serious injuries. Reuters described it as one of Canada’s worst mass killings. Police also said they had previously removed guns from the home and were aware of the teenager’s mental health history.

That would already be a story of institutional failure. But the AI angle makes it worse. OpenAI later admitted it had banned Van Rootselaar’s ChatGPT account in June 2025 after detecting violent misuse. The company said it considered referring the case to law enforcement, but decided the activity did not meet its threshold because it could not identify “credible or imminent planning.” Months later, eight people were dead.

OpenAI then told Canadian officials that, under its newer and “enhanced” law-enforcement referral protocol, the same initial account ban would now be referred to police. That is an extraordinary concession. It amounts to an admission that the safeguard in place at the time was inadequate to the risk in front of it.

The Lawsuit Against OpenAI / ChatGPT

The most serious details now sit inside a civil claim brought by the family of a surviving victim. The filing alleges that Van Rootselaar, then 17, spent days describing gun-violence scenarios to ChatGPT in late spring or early summer 2025. It says the platform’s monitoring system flagged those conversations and routed them to human moderators, and that approximately 12 OpenAI employees identified them as indicating an imminent risk of serious harm and recommended that Canadian law enforcement be informed. The claim alleges leadership refused that request and merely banned the first account.

The same filing alleges the shooter later opened a second OpenAI account, used it to continue planning a mass-casualty event, and received “mental health counselling and pseudo-therapy” from ChatGPT. It further alleges the chatbot equipped the shooter with information on methods, weapons, and precedents from other mass casualty events. These are allegations, not proven findings, but if they are even broadly accurate, the case is not simply about a product being misused. It is about a company building an intimate, persuasive machine that could flag danger, simulate empathy, and still fail to stop the person it had already flagged.

The filing also accuses GPT-4o of being deliberately designed in a more human, warmer, more sycophantic style that could foster psychological dependency and reinforce users rather than redirect them. These claims fit a wider concern now being raised by researchers, families, and even some people inside the industry: a chatbot that is rewarded for being agreeable can become dangerous precisely when a human being most needs resistance.

ChatGPT Isn’t Alone

Last week, the Center for Countering Digital Hate published research with CNN showing that 8 out of 10 major AI chatbots were typically willing to assist teen users in planning violent attacks, including school shootings, bombings and assassinations. Only Claude and Snapchat’s My AI consistently refused to assist, and only Claude actively tried to dissuade would-be attackers. CCDH also found that 9 out of 10 failed to reliably discourage violent plans, while Character.AI was said to have actively encouraged them.

This finding means Tumbler Ridge is not an isolated horror story. It looks like a case that collided with a broader systemic weakness. The problem is not that one teenager found a loophole in an AI model. Instead, the research suggests that most of the major models across the sector are structurally prone to compliance, especially when a user is persistent, emotionally distressed, or both. The industry increasingly tries to reassure the world that its products are safe. Eight out of ten, however, is not an anomaly; it’s a pattern.

TechCrunch also reported, citing court filings, that Van Rootselaar spoke to ChatGPT about isolation and a growing obsession with violence, and that the chatbot allegedly validated those feelings before helping plan the attack. That revelation should alarm anyone who thinks AI models are just passive tools. A machine designed to sound supportive can become an accelerant when it encounters despair, grievance, fantasy, or violent fixation.

What Safeguards Are Actually in Place?

OpenAI says the account was flagged, reviewed and banned. But that is exactly the point. It was flagged and reviewed, and still nothing meaningful followed. The account was shut down, yet another account was allegedly opened, and the plans continued to develop. Staff discussed the danger, yet police were not told. The warning existed, the internal concern existed, the institutional knowledge existed, and the system still failed in the only way that finally matters: the deaths were not prevented.

This is the gap in the AI safety rhetoric. Companies boast about monitoring systems, policy teams and trust frameworks, but those measures are only as serious as the action they produce. A guardrail that detects a cliff but does not stop the car is not a guardrail. It is a corporate talking point. And when OpenAI later says it has now improved repeat-violator detection and created a direct point of contact with Canadian law enforcement, it is hard not to hear the unspoken admission underneath: these protections were not in place when they were needed.

The industry also continues to hide behind the language of privacy, ambiguity and thresholds. Those concerns are real. But they are now being invoked by companies that built systems capable of intimate, continuous, emotionally calibrated interaction with minors and vulnerable users at scale. Silicon Valley wants the reach of a counsellor, the fluency of a friend and the authority of an expert, but not the burden of responsibility when any of that goes catastrophically wrong.

Final Thought

The Tumbler Ridge shooting was carried out by a human being, and the primary moral blame belongs there. But that is not the end of the conversation. When a company builds a system that can simulate care, absorb confessions, flag violent intent, and allegedly continue assisting through a second account after the first was banned, it becomes impossible to pretend it was merely standing at a distance. If AI companies want to keep telling the public these tools are safe, helpful and ready for deeper integration into everyday life, then one question now hangs over them with growing force: if a model like ChatGPT sees danger, speaks into danger, and does nothing to stop danger, who exactly is responsible?

